The proliferation of Artificial Intelligence (AI) across both sides of the recruitment process promises increased efficiency, cost savings and improved accuracy, but it also poses significant challenges for leaders in the HR & TA space.

This newly updated guide provides fresh context and insight for 2026, reflecting on the proliferation of AI use by candidates and TA professionals in a competitive job market.

Foreword

Understanding Artificial Intelligence

The Intersection of AI and Recruitment

The Benefits of AI in Recruitment

The Candidate Experience: AI from an Applicant's Perspective

Challenges and Limitations of AI in Recruitment

Future Perspectives: AI in Recruitment Going Forward

Conclusion

FAQs

We first published our guide to AI in Talent Acquisition in 2023, when the launch of OpenAI’s ChatGPT created a surge in interest in AI tools. Just three years later, the use of AI technology has expanded significantly on both sides of the recruiting process. Candidates are responding to the need to tailor their applications in a competitive market, while stretched HR functions, overwhelmed by the volume of candidates, are using screening software to shortlist and even choosing AI-led interviews to sift applicants in the initial stages.

As organisations grapple with rising hiring demands, skills shortages and tighter budgets, AI offers the promise of faster processes, richer insight and a more consistent experience for candidates and hiring managers alike. Yet that promise comes with important questions around fairness, transparency and the proper role of human judgment in high‑stakes decisions. KPMG found almost a third of executives are worried about an overreliance on AI when making people decisions, while Gartner predicts 30% of enterprises will face declining decision-making quality due to AI reliance.

In March 2023, a Guardian report found that one-third of Australian businesses were using AI tools in recruitment. Today, 93% of Fortune 500 HR teams have started using AI tools to streamline recruitment tasks, yet fewer than 1% say they are fully AI-ready.

The latest wave of research has found that AI is now used to support sourcing, screening, scheduling, assessments and onboarding in many larger organisations, not just experimental pilots, with adoption rates above 60% in some employer samples.

This is a sharp increase from earlier in the decade, but deep, integrated use of AI across the hiring lifecycle remains limited and uneven between organisations. This is consistent with earlier research showing that adoption increases with company size, with larger employers more likely to deploy AI and automation tools in recruitment. Studies show that AI tools can significantly reduce recruiters' time spent on initial screening and scheduling, freeing teams to focus on relationship‑building and advising hiring managers rather than on manual processing.

At Talent Insight Group, we see AI as an enabler rather than a replacement. The most successful organisations we work with are those that use AI to free their people from repetitive tasks so they can focus on what they do best: understanding context, building relationships and making nuanced decisions about talent. They are also the ones asking the right questions of their technology partners, demanding evidence for claims, and taking responsibility for how tools are implemented and governed.

This guide is designed to help HR and talent leaders cut through the hype and make informed, practical decisions about AI in recruitment. It outlines where AI can genuinely add value today, where its limitations and risks lie, and what good practice looks like as regulation and expectations evolve. Our hope is that it supports you in building recruitment processes that are not only more efficient, but also fairer, more transparent and more human‑centred in the years ahead.

Tim Gleave & David Steel

Joint Managing Directors, Talent Insight Group

Artificial Intelligence is often misunderstood and confused with simpler automation technologies. It’s important to distinguish between these technologies and their applications in order to understand both what is possible and how far along the implementation journey we are.

AI applies analysis and logic techniques, including Machine Learning, to interpret events, support and automate decisions, and take actions. In recruitment, modern AI systems often combine Machine Learning, large language models, and Natural Language Processing to understand CVs, job descriptions, and candidate communications in more human-like ways.

Example HR use case:

Screening applications based on a job description using all available data to recommend suitable candidates.

Automation follows a set of predefined rules to carry out actions, in the format "if x happens, do y". Many “AI recruiting tools” in the market are still mainly rule-based automation with light ML, which is important to understand when evaluating vendor claims.

Example HR use case:

Sending reminder emails and surveys.
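
The "if x happens, do y" pattern can be illustrated with a short Python sketch. It is a hypothetical example, not taken from any particular recruitment product: the `Application` record, the `due_reminders` function and the one-day rule are all assumptions made for illustration. Note that nothing here learns from data; the rule is fixed in advance.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Application:
    # Hypothetical record; real ATS data will look different.
    candidate: str
    interview_date: date
    reminder_sent: bool = False


def due_reminders(applications, today, days_before=1):
    """Rule: if an interview is `days_before` days away and no
    reminder has been sent yet, the candidate is due a reminder."""
    return [
        a.candidate
        for a in applications
        if not a.reminder_sent
        and a.interview_date - today == timedelta(days=days_before)
    ]


apps = [
    Application("A. Patel", date(2026, 3, 10)),
    Application("B. Jones", date(2026, 3, 12)),
    Application("C. Smith", date(2026, 3, 10), reminder_sent=True),
]

# Only A. Patel is exactly one day away with no reminder sent.
print(due_reminders(apps, today=date(2026, 3, 9)))
```

A tool built purely from rules like this is automation rather than AI, which is a useful distinction to keep in mind when reviewing vendor claims.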

Machine Learning is a part of AI. It describes learning from past data to improve answers and predictions.

Example HR use case:

Analysing past and current employee performance to predict similar applicants' performance in role.

Natural Language Processing (NLP) can also be used as part of AI. This technology can understand and analyse conversational language for sentiment and accuracy.

Example HR use case:

Evaluating applications and screening calls.

Traditional chatbots are trained via Machine Learning to recognise and respond to questions. This can often be simplistic, using basic questions and answers, or advanced, using conversational flows and pulling data from other systems. AI-powered chatbots can learn from previous conversations to improve without human input.

Example HR use case:

Applicant information bot

Generative AI uses existing information to create entirely new content. This could be in any format, from copy and imagery to audio, video and presentations. Here, AI applies analysis and logic techniques, including Machine Learning, to interpret events, support and automate decisions, and take actions.

Example HR use case:

Creating job descriptions and adverts, drafting outreach messages and interview questions, and generating personalised candidate communications at scale, which recruiters then review and refine.

We are still in the early stages of AI development and implementation. Familiar technologies, including automation, Machine Learning and Natural Language Processing, are already integrated into existing systems and part of the business toolkit.

As AI develops, we will repeat the cycle of technological breakthroughs followed by a proliferation of tools, then rationalisation and integration. AI experts call our current phase ‘Narrow AI’ as it is focused on achieving a specific outcome or working to a specific brief, and it has defined parameters on where it can learn from.

Since 2023, the most visible advances have been in so-called foundation models and generative AI. However, in recruitment, these are still applied as “narrow” systems that work to well-defined briefs and domains such as CV parsing, matching or content creation.

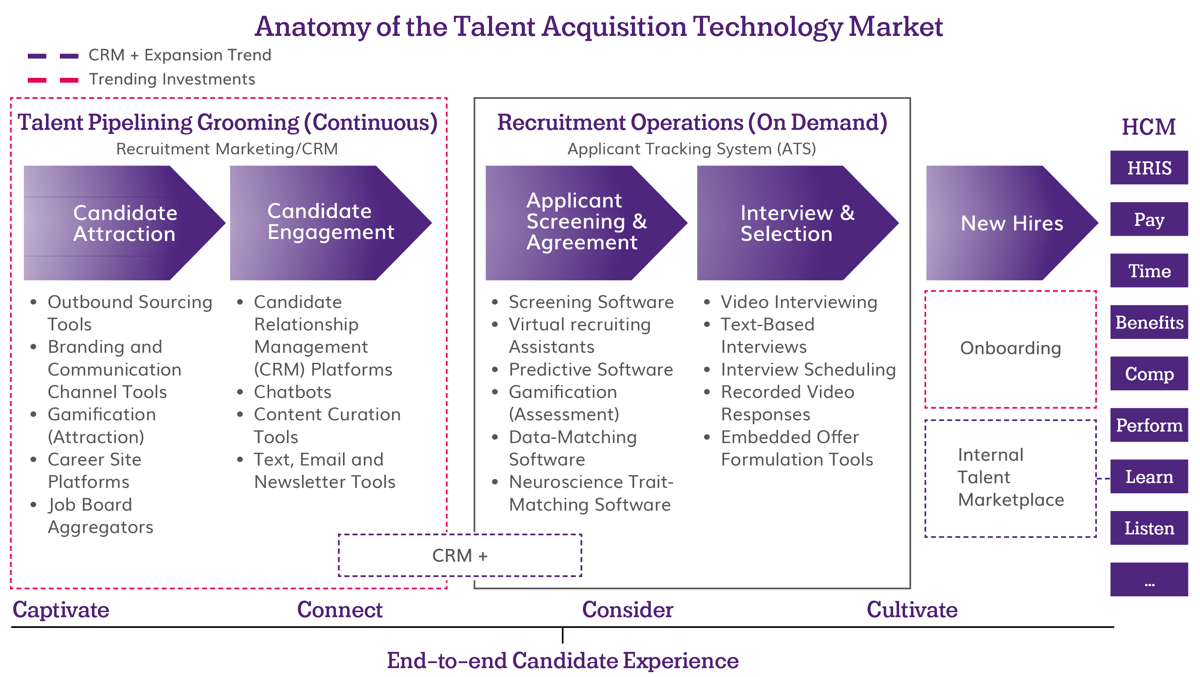

Many HR teams have implemented technology such as Applicant Tracking Systems (ATS) and Candidate Relationship Management systems (CRM) to automate manual processes and maintain contact with candidates throughout the application journey. Now AI provides new opportunities to support teams, improving efficiency and hiring speed.

More recent deployments increasingly sit on top of existing ATS/CRM platforms, using AI to orchestrate tasks like sourcing, matching, and scheduling rather than replacing core systems.

The AI tools that are currently available can help HR professionals in various ways, including:

Generative AI tools like ChatGPT can write full job descriptions from just a few prompts, screen adverts for biased language, and suggest new ways of advertising to broaden the talent you can attract. Generative AI tools can now also suggest alternative titles and targeting strategies to reach under-represented talent and skills-adjacent candidates, supporting more skills-based hiring.

AI tools can screen CVs to provide you with a shortlist based on your criteria. They can even schedule, run and screen virtual interviews, effectively allowing you to interview every candidate. Newer tools combine CV data, application responses and sometimes assessment results to generate ranked shortlists and fit scores, but they still require clear criteria, human review and ongoing monitoring to avoid amplifying bias or overlooking non-traditional profiles.

AI can scan available market data and provide dynamic offer recommendations based on location, salary and role. Some newer platforms also factor in internal equity, skills scarcity and predicted acceptance likelihood, though these models are only as reliable as the underlying pay and market data.

AI chatbots provide a new automated way for employers to stay in contact with candidates throughout the journey. This is important, both to maintain a positive impression and engage prospective employees to prevent dropout during the process. Recent case studies show that AI-powered chat and automated updates can materially reduce candidate dropout in high-volume hiring, provided candidates can easily escalate to a human when needed.

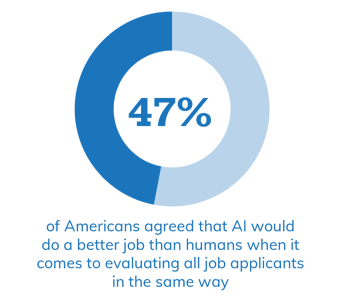

AI has the potential to bring huge benefits to both recruiters and prospective employees. In a recent survey, 47% of Americans agreed that AI would do a better job than humans when it comes to evaluating all job applicants in the same way.

The benefits for business:

Time is saved in the early stages of recruitment on tasks such as sifting, screening and scheduling. Automating these tasks allows HR professionals to focus on time with people rather than processes. Recent implementations report substantial reductions in time spent on CV screening and interview scheduling, as well as shorter time-to-shortlist, especially in high-volume roles.

Researchers from the London School of Economics found that AI improves hiring efficiency: it is faster, increases the fill rate for open positions, and recommends candidates with a greater likelihood of being hired after an interview. This provides a better candidate experience and reduces the time a seat remains empty, improving productivity.

Personal preferences and unconscious bias can easily become a part of the recruitment process. AI has the potential to apply consistent criteria and reduce some forms of human bias, and some studies have found more diverse shortlists under AI-assisted hiring than under fully human processes. However, more recent research has also highlighted that AI tools can reproduce or even amplify historical bias if they are trained on skewed data or if they are not regularly audited. For example, a 2024 study of state-of-the-art resume screening models found that CVs with White-associated names were preferred in around 85% of tests, while those with Black male names were never preferred over equivalent CVs with White male names, underlining the need for active safeguards rather than assuming that algorithms are neutral.

AI screening improves the quality of the match between employer and candidate, leading to better retention. More recent tools focus less on exact CV keyword matches and more on skills, adjacent experience and internal mobility, which can improve match quality and retention when combined with good human judgment.

AI chatbots can be used to answer candidate questions and keep candidates up to date throughout the process, maintaining engagement and reducing the risk of dropout. While AI-driven updates and chat can improve responsiveness, research since 2023 also shows that some candidates are wary of opaque automation in hiring, so combining automation with clear explanations and accessible human support is key.

AI can reduce certain manual errors (such as missed CVs or inconsistent screening questions), but newer studies highlight that it can introduce systemic errors if data, models, or implementation are flawed. Robust testing and monitoring remain essential.
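
As a rough illustration of what regular auditing and monitoring might involve, the Python sketch below compares shortlisting rates across candidate groups. It is a minimal example under stated assumptions: the group labels and figures are invented, the function names are hypothetical, and the 0.8 threshold borrows the "four-fifths" rule of thumb from US employment guidance, which is only one possible benchmark and not a legal test in every jurisdiction.

```python
from collections import Counter


def selection_rates(outcomes):
    """outcomes: iterable of (group, shortlisted) pairs.
    Returns the share of each group that was shortlisted."""
    totals, selected = Counter(), Counter()
    for group, shortlisted in outcomes:
        totals[group] += 1
        selected[group] += int(shortlisted)
    return {g: selected[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:  # nobody shortlisted, so there is nothing to compare
        return {g: 1.0 for g in rates}
    return {g: r / best for g, r in rates.items()}


# Invented data: 100 applicants per group.
outcomes = (
    [("group_a", True)] * 30 + [("group_a", False)] * 70
    + [("group_b", True)] * 18 + [("group_b", False)] * 82
)

rates = selection_rates(outcomes)
ratios = impact_ratios(rates)

# Assumed threshold: flag groups shortlisted at under 80% of the top rate.
flagged = [g for g, ratio in ratios.items() if ratio < 0.8]
print(rates, flagged)
```

A flag from a check like this is a prompt for human investigation, not proof of bias; small samples and legitimate job-related differences can both move the numbers.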

Dr Grace Lordan, Associate Professor in Behavioural Science at LSE and Founding Director of The Inclusion Initiative

Although there are many benefits for employers, businesses must also consider the candidate’s experience. Studies have shown that job applicants who have had a poor candidate experience are less likely to seek employment there again, are more likely to share their bad experience with others, and are less likely to purchase products from that company in the future.

In 2023, a Pew Research study found that the vast majority of respondents were unaware that AI was being used in the hiring process, and two-thirds said they would not want to apply for a position if they knew AI was used to help make hiring decisions. The same study found that people strongly opposed AI making the final hiring decision by a ten-to-one ratio. Moreover, they are more sceptical that AI would be an improvement over humans when it comes to thinking outside the box; 44% said AI would do a worse job when it comes to seeing potential in job applicants who may not perfectly fit the job description.

Research from the London School of Economics backed up this negative response to using AI in hiring. The main reasons for this negative view were privacy concerns and a less-personal, more-robotic experience. Overall, organisations that deploy AI hiring were seen as less attractive than those hiring through humans.

In the years since this research, AI has become more visible in consumer tools and workplaces. Yet, studies suggest many candidates still do not fully understand when or how AI is used in hiring, which can erode trust if organisations are not transparent.

Economic stagnation has increased the competition for roles, and with higher volumes of applications to review, more organisations have turned to AI to help teams overwhelmed with applications. However, this has created a less personal experience for candidates, with automated responses and encounters with AI tools, such as chatbots and AI interviewers, on the rise.

While the majority of candidates reacted positively to the increased efficiency, 20% of candidates said the process worsened, citing depersonalisation and feeling as though they were interacting with a machine. Recent press coverage has also highlighted increased frustration, especially amongst younger jobseekers, who report receiving automatic rejections just a few moments after application and struggling to interact with the AI interviewers through technical issues and stilted, machine-like questioning.

More recent commentary has highlighted that candidate perceptions can improve when employers clearly explain the role of AI in their process, emphasise that humans make final decisions, and provide options for human review.

As with any new technical implementation, there are challenges in onboarding and integrating a new piece of software alongside existing systems. Companies must also allocate time and resources to training the tool on existing datasets.

AI technology is often enterprise software aimed at large organisations, with pricing not made transparent. Businesses will need to research available technology options or engage specialist technology consultants to advise on their options.

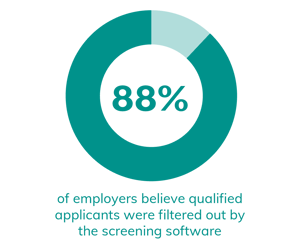

Research by Harvard Business School found that 88% of employers believe qualified applicants were filtered out by the screening software. There are multiple reasons why this could happen, such as missing keywords on applications, gaps in work history being viewed negatively by technology and bias based on a lack of previous performance data for similar candidates. Providing a bloated job description could also lead to qualified candidates being missed as the shortlisting criteria become too extensive.
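
To make the keyword problem concrete, here is a simplified Python sketch of strict keyword screening. It is an illustration only and does not represent how any specific screening product works; the `keyword_screen` function, the keyword list and the sample CV text are all hypothetical.

```python
import re


def keyword_screen(cv_text, required_keywords):
    """Pass a CV only if every required keyword appears as a whole
    word or phrase. Returns (passed, missing_keywords)."""
    text = cv_text.lower()
    missing = [
        kw for kw in required_keywords
        if not re.search(r"\b" + re.escape(kw.lower()) + r"\b", text)
    ]
    return (not missing, missing)


required = ["stakeholder management", "budgeting", "recruitment"]

# A plausibly qualified candidate who describes the same experience
# in different words.
cv = (
    "Led talent acquisition for a 200-person business, "
    "managing stakeholders and a 1.2m hiring budget."
)

passed, missing = keyword_screen(cv, required)
print(passed, missing)
```

In this sketch the candidate is rejected on all three keywords despite describing equivalent experience. Each extra required keyword can only shrink the set of CVs that pass, which is one way a bloated job description leads to qualified candidates being missed.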

Multiple studies cast doubt on some applications of AI. An NYU study of two AI platforms found significant variances in outcome based on resume format and the presence of LinkedIn URLs. There are also concerns about the evidence behind video screening techniques based on expression and tone of voice analysis. Independent researchers from the University of Cambridge’s Centre for Gender Studies created a tool which showcases how a simple thing like lighting can impact the results of these assessments.

As newer systems based on large language models are introduced into CV analysis and interviewing, early research suggests they can sometimes hallucinate skills or over-confidently rate candidates, so validation on real-world data remains critical.

As with any tool, AI can only perform to the level of the data it can access. For example, features such as dynamic salary offers will be limited by the availability of open market data, which may make recommendations for specific locations and roles unreliable.

As AI uses predictive analysis to infer future performance, it could perpetuate existing bias. For example, an AI trained on data showing a pattern of promoting men with an Ivy League college degree could read this as a predictor of success and prioritise those applications above those of women or those with a different educational path but relevant experience.

An example of how this bias can manifest is shown in US research conducted in 2020 which found that facial-analysis technology created by Microsoft and IBM, among others, performed better on lighter-skinned subjects and men.

This example cited in the Guardian is a reminder of how crucial it is to be mindful of how AI tools are trained:

In 2017, Amazon quietly scrapped an experimental candidate-ranking tool that had been trained on CVs from the mostly male tech industry, effectively teaching itself that male candidates were preferable. The tool systematically downgraded women’s CVs, penalising those that included phrases such as “women’s chess club captain”, and elevating those that used verbs more commonly found on male engineers’ CVs, such as “executed” and “captured”.

Since these early high-profile cases, additional research has shown that recommendation systems in hiring and performance evaluation can nudge human decision-makers toward biased outcomes, even when they think they are using AI as a “second opinion”. This has shifted the focus from “is the algorithm biased?” to “how do humans and algorithms interact in practice?”

AI has enormous potential to improve efficiency and effectiveness in Talent Acquisition, but the most significant shift now emerging is how organisations understand and access talent.

Rather than relying solely on job titles and keyword matches, newer systems are increasingly focused on skills, adjacent experience and internal mobility, mapping the capabilities of both internal and external talent pools to support more skills-based hiring decisions.

At the same time, many employers are beginning to deploy AI “assistants” that can orchestrate sequences of tasks such as sourcing, shortlisting, scheduling and candidate communication under human supervision, allowing recruiters to spend more time on relationship-building, complex judgment calls and strategic workforce planning. While some vendors claim their tools can predict success in a role using a wide range of candidate data, including social media profiles and online behaviour, these approaches raise significant questions about privacy, data protection, fairness and proportionality.

Many organisations are therefore choosing to restrict AI inputs to information that candidates provide directly during the recruitment process and to job-relevant, quality-checked external data, balancing innovation with trust and compliance.

AI in recruitment is still surrounded by considerable hype, fuelled by high-profile advances and the popularity of ChatGPT, Claude and Perplexity, amongst others, but it is also now embedded in many day-to-day hiring processes.

For most organisations, the question is no longer whether AI will play a role in talent acquisition, but how to use it effectively, fairly and in line with their values. That means recognising both the clear gains (faster screening and scheduling, richer insights, smoother communication) and the very real risks around data quality, bias, privacy, transparency and over-reliance on automated recommendations.

As the technology matures, we can expect fewer standalone tools and more deeply integrated capabilities within core HR platforms, accompanied by clearer regulation, guidance and expectations from candidates, regulators and society.

Organisations that benefit most will be those that treat AI as assistive technology, invest in the quality and governance of their data, and equip recruiters and hiring managers to interpret AI outputs critically rather than accept them at face value. They will also be ready to explain and justify how AI is used in their hiring decisions. There are tangible advantages to using AI today, particularly for employers managing large applicant volumes or complex talent needs.

But those advantages come with responsibilities: to validate vendor claims, to monitor tools for unintended bias and errors, to limit the use of data to what is relevant and proportionate, and to maintain meaningful human oversight at key decision points. By approaching AI with curiosity, rigour and humility, organisations can harness its strengths while protecting candidates, employees and their own reputation, turning a period of rapid change into an opportunity to build more efficient, more inclusive recruitment for the future.

No, at least not yet! AI has a long way to go before it reaches human-like capacity. Experts define this stage as narrow AI, where we must provide a clear brief and point the technology towards data that can help provide a solution. AI can’t undertake original research and has yet to be proven to analyse an interview reliably.

However, certain aspects can help overstretched teams, especially in the screening and sifting phase of recruitment. Even the most advanced systems deployed in practice today are designed to support and augment recruiters rather than replace them, and regulatory trends are reinforcing the expectation of meaningful human involvement in hiring decisions.

Small businesses can benefit from using generative AI tools such as ChatGPT to create job descriptions and adverts, and suggest avenues to advertise to widen the reach of the role. Businesses that have implemented ATS and CRM systems to help with recruitment should speak to their current providers about AI use within their tools, as there may be features that aren’t being utilised.

Yes, but AI use in recruitment needs to be carefully considered. Employers must validate the claims made by vendors concerning ethics and bias and be transparent with candidates on how and why AI is used in the recruitment process.

AI can support more consistent application of criteria and help highlight patterns of bias, but it can also reproduce or amplify existing inequities if trained on skewed data or left unmonitored. The evidence since 2023 points to AI as a tool that can either help or harm fairness, depending on how it is designed, governed, and used.

As AI technology is in its early stages, there are several risks, including legal risks related to ownership and copyright of publicly available data, privacy and data protection concerns from prospective candidates, and ethical concerns about fairness and equality in the recruitment process. Recent debates have also highlighted risks around a lack of transparency, limited explainability of complex models, and over-reliance on AI recommendations by human decision-makers.

© Talent Insight Group 2025